Tutorial Talk

Tensor Networks for Density Estimation:

The merits of low-rank structures, tractable inference, and uncertainty quantification

17 Aug. 2026 @ KIT Royal Tropical Institute in Amsterdam

Conference on Uncertainty in Artificial Intelligence (UAI 2026)

Presenters: Kazu Ghalamkari and Morten Mørup

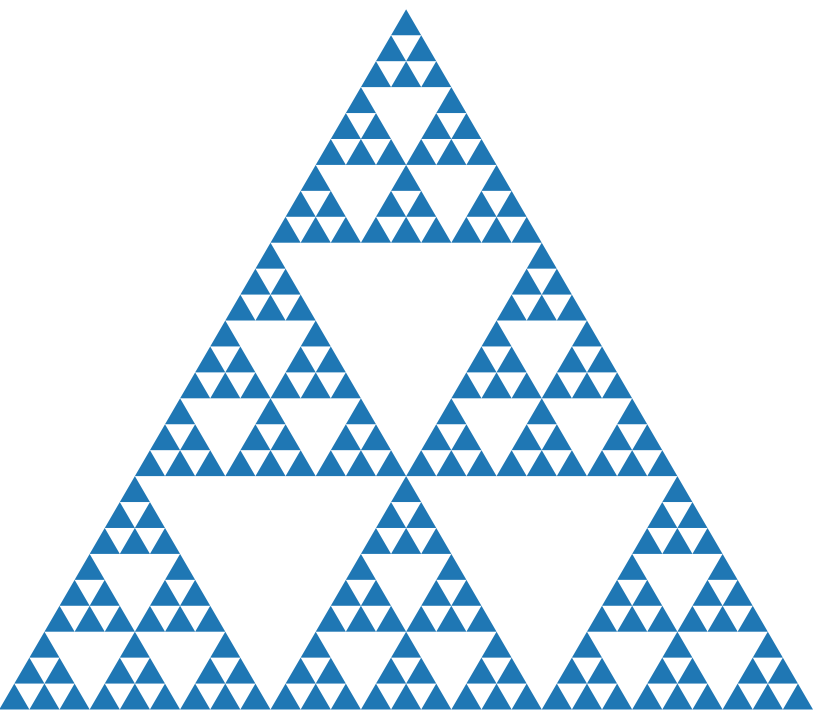

How can we estimate the true distribution underlying given data? This is one of the fundamental questions in machine learning. With current advances in GPU accelerators, the community relies heavily on deep-learning-based density estimation, which has been highly successful in expressivity and scalability. However, important challenges remain: theoretical intractability, the need for costly hyperparameter tuning, weaker optimization guarantees, and a non-trivial extension to discrete settings. Recently, alternative approaches to density estimation centered on tensor networks have been attracting attention because they can overcome these difficulties. Connections have also recently been established between tensor networks and other fields such as probabilistic circuits, information geometry, logic programming, and relational learning. These connections are forming a rich community in which tensors serve as a shared language, as seen in the recent workshops Connecting Low-Rank Representations in AI at AAAI’25 and ICML’26 and the tutorial Foundations of Tensor/Low-Rank Computations for AI at NeurIPS 2025. Against this background, we offer a tutorial on tensor-based density estimation, whose exact marginalization, natural Bayesian extension, and convergence guarantees directly match the interests of the UAI community. Aiming to welcome newcomers and to bridge various fields, the tutorial covers the following topics: (i) how tensors are useful for density estimation, (ii) how tensor-based density estimation connects diverse fields, and (iii) what the important future directions of tensor networks are for the UAI community.
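To illustrate what exact marginalization means for a tensor-based density, here is a minimal sketch in Python with NumPy. It assumes a CP-style mixture model over three categorical variables; the sizes, the names `w`, `A1`, `A2`, `A3`, and the random parameters are all hypothetical choices for illustration and are not taken from the tutorial itself.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: three categorical variables and R mixture components.
dims, R = (4, 3, 5), 2

# Mixture weights w_r (non-negative, summing to 1).
w = rng.random(R)
w /= w.sum()

# One factor matrix per variable; each column is a categorical distribution.
factors = []
for n in dims:
    A = rng.random((n, R))
    factors.append(A / A.sum(axis=0, keepdims=True))
A1, A2, A3 = factors

# Dense joint p(x1, x2, x3) = sum_r w_r * A1[x1, r] * A2[x2, r] * A3[x3, r].
joint = np.einsum("r,ir,jr,kr->ijk", w, A1, A2, A3)

# Exact marginal p(x1, x3): every column of A2 sums to 1, so summing out x2
# simply drops A2 from the product. The dense joint is never needed.
marginal_fast = np.einsum("r,ir,kr->ik", w, A1, A3)

# Brute-force check: sum the dense joint over x2.
marginal_slow = joint.sum(axis=1)
```

In this sketch the marginal is obtained from the factors alone, so its cost grows with the number of factors rather than with the size of the full joint tensor.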

Student projects

Scalable Tensor Decomposition beyond Euclidean Formulation

Feb. 2026 – Jun. 2026

Bachelor of Artificial Intelligence and Data (02466 Project Work) at DTU Compute, 20 ECTS, 1 undergraduate student

Supervisors: Kazu Ghalamkari and Morten Mørup

A tensor (multidimensional array) is a fundamental data structure in machine learning. By decomposing tensor-formatted data into a sum-of-products form, we can extract the patterns, relevant information, and features underlying the data. However, a naïve implementation of this task, which minimizes the Frobenius error, suffers from ill-posedness and from NP-hardness, the latter even in the simplest rank-1 setting (R=1). To overcome these challenges, our team has recently introduced two alternative reformulations: (i) an extension of EM (Expectation-Maximization)-based tensor decomposition and (ii) deformed decomposition based on deformed algebra. We offer research opportunities to scale these decompositions to large datasets in practical problem settings using stochastic methods, and to investigate the models' behavior in the overparameterized regime. We will also explore applications of these decompositions.

A Comparative Analysis of Classification Approaches for Discrete Data

Feb. 2026 – Jun. 2026

Bachelor of Artificial Intelligence and Data (02466 Project Work) at DTU Compute, 10 ECTS × 2 undergraduate students

Supervisors: Mads Emil Koefoed Rehof, Kazu Ghalamkari, and Morten Mørup

Lectures

Advanced Topics in Machine Learning: Tensor Networks for Machine Learning, 2026

27 Aug. 2026, 1:00 pm – 4:00 pm @ Summer school at DTU (Lyngby campus)

Tensor Networks for Density Estimation Beyond KL Divergence

How can we estimate the true distribution underlying observed data? This is a fundamental question in machine learning. In this lecture, I will show that tensor decomposition is particularly well suited to discrete (categorical) density estimation, because it can naturally exploit the discreteness of tensor indices. We will then discuss recent developments in tensor-based density estimation beyond the Kullback–Leibler (KL) divergence, with a focus on improving robustness to noise and outliers. Although the KL divergence is often easier to optimize than other divergences, it is known to be less robust to outliers and noise. To address this issue, we will look at efficient closed-form optimization methods based on a doubly bounded EM algorithm, as well as a relaxation approach to density estimation that uses deformed algebra to flatten the feasible set, thereby enabling iterative convex optimization. We will also discuss the limitations of conventional low-rank modeling approaches and introduce tensor many-body decomposition as an alternative, energy-based approach to density estimation.

Special Topics in Mechano-Informatics Ⅱ @ Tokyo University, 2024

5th Jun. 2024, 2:55 pm – 4:40 pm, Online

Matrix and tensor factorization for machine learning [Slides] [PDF]

Matrices and tensors, or multidimensional arrays, are highly versatile data structures. They can store data in a variety of formats: images, videos, tables, and sensor data. By decomposing such matrices and tensors, we can extract insight from the data, such as patterns and features. In this lecture, I introduce singular value decomposition (SVD) for matrices, non-negative matrix factorization (NMF) for non-negative matrices, and CP and Tucker decomposition for tensors. The objective is not only to understand the various decomposition methods one by one but also, by focusing on the properties of each decomposition, to acquire the know-how to select the appropriate method for the situation and constraints at hand in real-world scenarios. As downstream applications, the lecture covers subspace methods (CLAFIC) for classification tasks and EM-based methods for imputing missing values in the input data. In the last segment of the lecture, I cover some of the difficulties of tensor decomposition (TD). TD suffers from difficulties that do not occur in SVD, such as ill-posedness and NP-hardness in optimization, and I will discuss recent research trends for avoiding them.
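As a small illustration of the SVD part of this lecture, the following sketch computes a rank-k truncation of a matrix with NumPy and checks the Eckart-Young property that its Frobenius error equals the norm of the discarded singular values. The random matrix `X`, the helper name `truncate`, and the choice `k = 2` are assumptions made for the example and are not taken from the lecture material.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(8, 6))  # hypothetical data matrix

# Thin SVD: X = U @ diag(s) @ Vt, singular values sorted in descending order.
U, s, Vt = np.linalg.svd(X, full_matrices=False)

def truncate(k):
    """Rank-k approximation of X built from the k leading singular triplets."""
    return (U[:, :k] * s[:k]) @ Vt[:k, :]

k = 2
X_k = truncate(k)

# Frobenius error of the truncation versus the norm of the discarded
# singular values; the two quantities coincide (Eckart-Young theorem).
error = np.linalg.norm(X - X_k)
predicted = np.sqrt(np.sum(s[k:] ** 2))
print(error, predicted)
```

No comparably simple closed-form truncation exists for general tensor decompositions, which is one way to see the difficulties of TD mentioned above.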

Machine Learning Lectures for Physicists

17 Jun. 2024, 1:00 pm – 5:00 pm, Room 118, University of Tsukuba Tokyo Campus

Lecture 11: Low-Rank Decomposition and Many-Body Decomposition of Matrices and Tensors [Slide]

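Matrices and tensors (multidimensional arrays) are fundamental data structures that naturally describe the high-order degrees of freedom in data. Low-rank decomposition, which approximates data stored as a matrix or tensor by a linear combination of a small number of bases, lets us extract the information we need from the data and discover hidden patterns and knowledge. The first half of this lecture introduces low-rank decomposition of matrices and tensors, mainly with applications to machine learning in mind. It covers singular value decomposition (SVD), non-negative matrix factorization (NMF), and the CP and Tucker decompositions of tensors, and discusses how to select an appropriate decomposition method according to various real-world constraints. The second half focuses on the ill-posedness and NP-hardness that arise in tensor decomposition and, as recent research that overcomes these difficulties, introduces tensor many-body decomposition, which focuses on higher-order interactions among the axes (modes) of a tensor rather than on its low-rank structure. Because tensor many-body decomposition assumes only visible variables in the model, it enables intuitive model selection and allows tensor decomposition to be formulated as a stable convex optimization problem. The lecture also presents the mathematical relationship between low-rank decomposition and many-body decomposition.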

Introduction to Matrix Factorization for Data Science

11 Jan. 2024, Kokugakuin University, Data Science 2023 (Session 13, guest lecture)

09 Jan. 2025, Kokugakuin University, Data Science 2024 (Session 13, guest lecture)

08 Jan. 2026, Kokugakuin University, Data Science 2025 (Session 13, guest lecture)

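Many kinds of real-world data, such as images and purchase records, are stored on a computer as two-dimensional arrays. By regarding such data as matrices in the sense of linear algebra and decomposing them into a product form, we can extract patterns and features from the data. The patterns obtained in this way can be used in a variety of applications. This lecture introduces singular value decomposition (SVD), which imposes orthonormality on the decomposed representation, and non-negative matrix factorization (NMF), which improves interpretability by imposing non-negativity constraints. It also shows that the subspace method (CLAFIC), which is based on decomposing the data, enables applications such as classification, denoising, and anomaly detection. In addition, the kernel subspace method (Kernel CLAFIC) is explained as a remedy for data that are not linearly separable. Because a quantity called the rank of a matrix plays an important role in matrix factorization, the lecture begins with a review of the definition of rank for newcomers to linear algebra.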
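The subspace method (CLAFIC) mentioned above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the lecture's own code: the function names `fit_clafic` and `predict_clafic`, the synthetic two-class data, and the subspace dimension `k = 2` are all hypothetical. Each class is represented by the span of its leading right singular vectors (without mean-centering), and a sample is assigned to the class whose subspace captures the largest share of its squared length.

```python
import numpy as np

def fit_clafic(X, y, k):
    """Map each label to a (d, k) orthonormal basis of its class subspace."""
    bases = {}
    for label in np.unique(y):
        Xc = X[y == label]  # CLAFIC uses the raw samples, no mean-centering
        _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
        bases[label] = Vt[:k].T
    return bases

def predict_clafic(bases, X):
    """Assign each sample to the class with the largest projection length."""
    labels = list(bases)
    scores = np.stack(
        [((X @ bases[label]) ** 2).sum(axis=1) for label in labels], axis=1
    )
    return np.array(labels)[scores.argmax(axis=1)]

# Synthetic data (an assumption for this sketch): each class lies near a
# different 2-dimensional coordinate subspace of a 5-dimensional space.
rng = np.random.default_rng(0)

def make_class(axes, n=50):
    coef = rng.uniform(1.0, 2.0, size=(n, 2)) * rng.choice([-1.0, 1.0], size=(n, 2))
    X = 0.05 * rng.normal(size=(n, 5))  # small noise in all directions
    X[:, axes] += coef                  # signal inside the class subspace
    return X

X = np.vstack([make_class([0, 1]), make_class([2, 3])])
y = np.array([0] * 50 + [1] * 50)

bases = fit_clafic(X, y, k=2)
accuracy = (predict_clafic(bases, X) == y).mean()  # training accuracy only
print(accuracy)
```

The accuracy here is measured on the training samples, so it only shows that the procedure runs and separates this easy synthetic case.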