General motivation and big picture

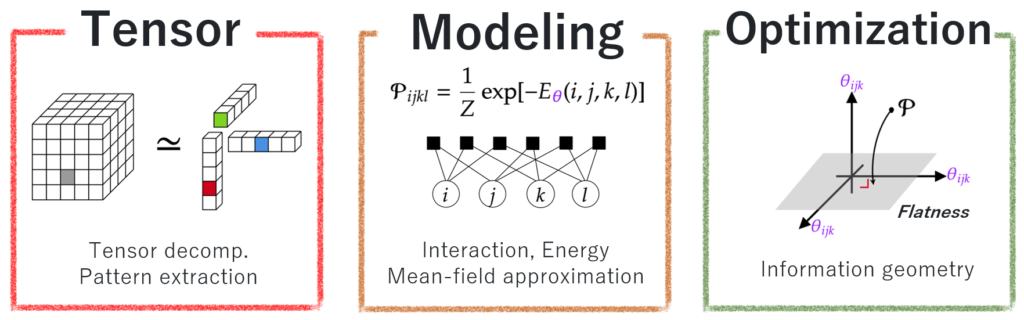

My primary research goal is to develop a systematic machine learning framework grounded in physical concepts. While modern machine learning has achieved great success, it often relies on empirical black-box methods that suffer from ad hoc model selection, local minima issues, and extensive hyperparameter tuning. I aim to replace these heuristics with a systematic approach by leveraging core concepts from physics, including energy, interactions, temperature, and entropy. I believe that by formulating machine learning in a common language with physics, we can bring the generality of physical principles into machine learning, which allows us to develop a systematic framework that is inherently more predictable and robust than traditional trial-and-error approaches.

However, naïvely bridging physics and machine learning often introduces additional complexity. To address this issue, I employ information geometry, which provides the concept of flatness within models and data. This flatness provides the insight needed to reformulate the target problems as convex optimizations, preserving mathematical tractability while enabling a systematic formulation informed by physics-grounded intuition. As described below, I have demonstrated these ideas in tensor factorization and the tempered Expectation-Maximization (EM) algorithm, and I will further enhance their expressivity using advanced concepts from non‑equilibrium statistical mechanics.

Many-body approximation for tensors

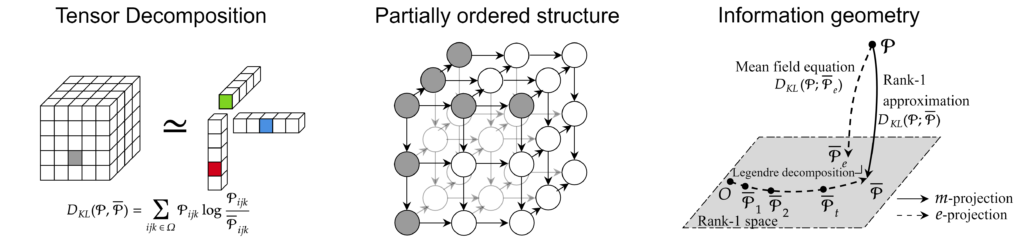

Tensor factorization has fundamental difficulties in rank tuning and optimization. To avoid these difficulties, we develop a rank-free, energy-based tensor factorization, called many-body approximation, that allows intuitive modeling of tensors and global optimization. Our approach models a tensor as a probability distribution through an energy function that describes the interactions between modes, which induces a dually flat statistical manifold.
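As a rough illustration of what such an energy function can look like, consider a normalized non-negative third-order tensor with entries P_{ijk}. The notation below (the symbols H and the sign convention) is assumed here for illustration and may differ from the notation in the papers:

```latex
P_{ijk} = \exp\bigl(-E_{ijk}\bigr), \qquad
E_{ijk} = H_0
  + H^{(1)}_{i} + H^{(2)}_{j} + H^{(3)}_{k}
  + H^{(12)}_{ij} + H^{(13)}_{ik} + H^{(23)}_{jk}
  + H^{(123)}_{ijk}
```

In this picture, a two-body approximation would keep the one-mode and pairwise terms and set the three-mode term H^{(123)} to zero, while a one-body approximation would keep only H^{(1)}, H^{(2)}, and H^{(3)}. The modeling choice is then which interactions between modes to include, rather than which rank to use.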

Related papers

– K. Ghalamkari et al., “Many-body Approximation for Non-negative Tensors,” presented at NeurIPS 2023.

– K. Ghalamkari et al., “Deformed decomposition for Non-negative Tensors,” presented at AISTATS 2026.

Faster rank-1 NMF with missing values

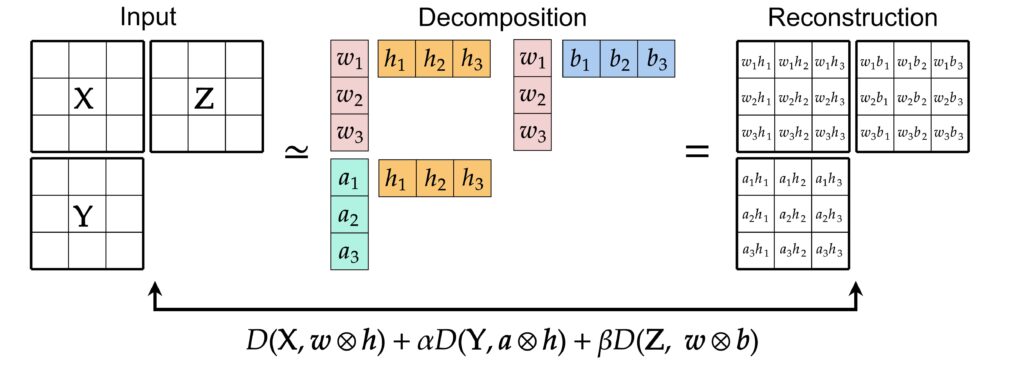

Nonnegative multiple matrix factorization (NMMF) is the task of decomposing multiple nonnegative matrices using shared factors. We have derived a closed-form solution for the best rank-1 NMMF when the cost function is the KL divergence. Using this formula, we proposed A1GM, a faster method for finding approximate rank-1 NMF solutions when the input matrix has missing values.
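To give a feel for what a closed-form solution means here, the following minimal sketch covers only the simplest special case: a single, fully observed positive matrix. In that case the rank-1 matrix minimizing the generalized KL divergence from the input is the outer product of the row sums and column sums divided by the total sum. The sketch does not implement NMMF or A1GM, and the function names are illustrative rather than taken from the papers.

```python
import numpy as np

def generalized_kl(X, Q):
    """Generalized KL divergence D(X || Q) for nonnegative arrays (0 log 0 := 0)."""
    mask = X > 0
    return float(np.sum(X[mask] * np.log(X[mask] / Q[mask])) - X.sum() + Q.sum())

def best_rank1_kl(X):
    """Closed-form rank-1 matrix minimizing D(X || Q) for a fully observed matrix."""
    row = X.sum(axis=1)          # row sums
    col = X.sum(axis=0)          # column sums
    return np.outer(row, col) / X.sum()

rng = np.random.default_rng(0)
X = rng.random((4, 5)) + 0.1     # strictly positive toy input
Q = best_rank1_kl(X)

# Compare against an arbitrary positive rank-1 candidate.
candidate = np.outer(rng.random(4) + 0.05, rng.random(5) + 0.05)
print("closed form :", generalized_kl(X, Q))
print("random rank1:", generalized_kl(X, candidate))
```

No iteration, initialization, or learning rate is involved. The solution also preserves the row and column sums of the input.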

Related papers

– K. Ghalamkari et al., “Fast Rank-1 NMF for Missing Data with KL Divergence,” presented at AISTATS 2022.

– K. Ghalamkari et al., “Non-negative low-rank approximations for multi-dimensional arrays on statistical manifold,” published in Information Geometry (Springer).

Tensor low-rank approximation

Tensor low-rank approximation is the task of approximating a tensor (multidimensional array) with a low-rank tensor. To date, most low-rank approximation methods are based on gradient descent using the derivative of the cost function, which often requires careful tuning of the initialization, learning rate, and tolerance threshold. By mapping tensors to probability distributions and applying the theory of projection in information geometry, we have developed Legendre Tucker Rank Reduction (LTR), a fast non-negative low-rank approximation method that does not rely on gradient methods. From the viewpoint of information geometry, we also show that the rank-1 approximation can be regarded as a mean-field approximation, because the probability distribution corresponding to a rank-1 tensor is expressed as a product of independent distributions.
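The mean-field reading of the rank-1 case can be sketched in a few lines. The example below is a minimal illustration, not LTR itself (which handles general Tucker ranks). It normalizes a positive tensor into a joint distribution, takes the marginal distribution of each mode, and multiplies these marginals back together. The function name and the toy tensor are assumptions made for this sketch.

```python
import numpy as np

def rank1_mean_field(T):
    """Rank-1 approximation of a nonnegative tensor as a product of its mode marginals."""
    total = T.sum()
    P = T / total                                  # view the tensor as a joint distribution
    Q = np.ones_like(P)
    for mode in range(T.ndim):
        other_axes = tuple(a for a in range(T.ndim) if a != mode)
        marginal = P.sum(axis=other_axes)          # distribution of this mode alone
        shape = [1] * T.ndim
        shape[mode] = -1
        Q = Q * marginal.reshape(shape)            # product of independent distributions
    return total * Q                               # restore the original scale

rng = np.random.default_rng(0)
T = rng.random((3, 4, 5)) + 0.1                    # strictly positive toy tensor
R = rank1_mean_field(T)

# Each one-mode marginal of the approximation matches that of the input.
for mode in range(T.ndim):
    other_axes = tuple(a for a in range(T.ndim) if a != mode)
    print(mode, np.allclose(R.sum(axis=other_axes), T.sum(axis=other_axes)))
```

The result is computed in a single pass over the modes, with no initialization, learning rate, or stopping tolerance to choose.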

Related papers

– K. Ghalamkari et al., “Fast Tucker Rank Reduction for Non-Negative Tensors Using Mean-Field Approximation,” presented at NeurIPS 2021.

– K. Ghalamkari et al., “Non-negative low-rank approximations for multi-dimensional arrays on statistical manifold,” published in Information Geometry (Springer).

Valley polarization in layered materials by light absorption

I received my master’s degree in theoretical condensed matter physics, studying hexagonal lattice systems such as graphene, h-BN, silicene, and stanene.

Hexagonal lattice systems whose unit cell contains two different atoms have K and K’ valleys in the Brillouin zone. It is known that electrons in the K (K’) valley are excited when left (right) circularly polarized light is incident on such a system. The system is said to be valley polarized when the valley in which electrons are more likely to be excited depends on the polarization state of the incident light. I have analytically demonstrated valley polarization in hexagonal lattice systems consisting of atoms with complex electronic orbitals, such as transition-metal dichalcogenides (TMDs) [1]. We have also provided an analytical explanation of how the valley polarization varies with the energy band gap and the energy of the incident light [2]. Furthermore, I have clarified the relationship between band inversion and valley polarization by studying the presence or absence of valley polarization in the Haldane model [3].

[1] Y. Tatsumi et al., “Laser energy dependence of valley polarization in transition-metal dichalcogenides,” Phys. Rev. B 94, 235408 (2016).

[2] K. Ghalamkari et al., “Energy Band Gap Dependence of Valley Polarization of the Hexagonal Lattice,” J. Phys. Soc. Jpn. 87, 024710 (2018).

[3] K. Ghalamkari et al., “Perfect circular dichroism in the Haldane model,” J. Phys. Soc. Jpn. 87, 063708 (2018).